
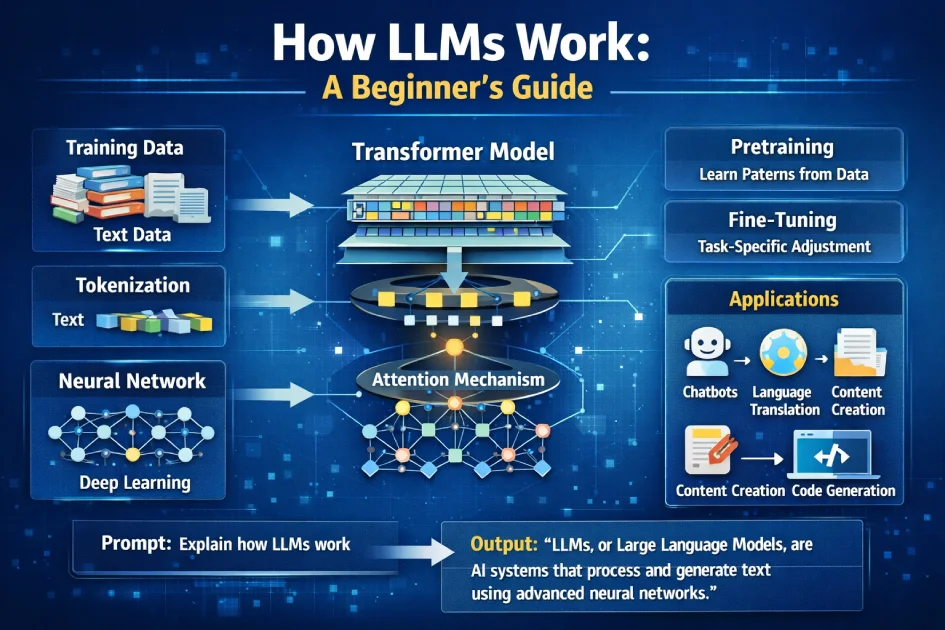

In recent years, Large Language Models (LLMs) have transformed the way humans interact with technology. From chatbots and virtual assistants to content creation and coding support, LLMs are powering a new era of artificial intelligence. As part of the broader Next Gen AI ecosystem, LLMs play a crucial role in enabling intelligent automation and human-like communication.

But how do LLMs work? This beginner-friendly guide breaks down the fundamentals of LLMs in simple terms, covering key concepts such as machine learning, neural networks, and natural language processing.

What Are Large Language Models (LLMs)?

Large Language Models (LLMs) are advanced artificial intelligence systems trained to understand and generate human language. They are built using deep learning techniques and are designed to process massive amounts of text data.

LLMs can:

- Generate human-like text

- Answer questions

- Translate languages

- Summarize content

- Assist with coding and research

Popular examples of LLM applications include chatbots, AI writing tools, and voice assistants.

How Do LLMs Work?

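At their core, most LLMs work by predicting the next word (more precisely, the next token) in a sequence based on the text that comes before it. Generating a response means repeating this prediction one step at a time. While this may sound simple, the process relies on complex mathematical models and massive datasets.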
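To make next-word prediction concrete, here is a deliberately tiny sketch in Python: a bigram model that counts which word follows which in a hypothetical three-sentence corpus, then generates text by repeatedly picking the most frequent next word. Real LLMs use transformers that consider much longer context, work on tokens rather than whole words, and learn their probabilities instead of simply counting, so treat this as an illustration of the idea only.

```python
from collections import Counter, defaultdict

# Hypothetical toy corpus; real LLMs train on vastly more text.
corpus = [
    "the cat sat on the mat",
    "the cat ate the fish",
    "the dog sat on the rug",
]

def train_bigram_counts(sentences):
    """Count how often each word is followed by each other word."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for current, following in zip(words, words[1:]):
            counts[current][following] += 1
    return counts

def next_word_probabilities(counts, word):
    """Turn raw follow-up counts into a probability distribution."""
    followers = counts.get(word, Counter())
    total = sum(followers.values())
    return {w: c / total for w, c in followers.items()}

def generate(counts, start, max_words=5):
    """Greedily append the most likely next word, one word at a time."""
    words = [start]
    for _ in range(max_words):
        followers = counts.get(words[-1])
        if not followers:  # no known continuation: stop
            break
        words.append(followers.most_common(1)[0][0])
    return " ".join(words)

counts = train_bigram_counts(corpus)
print(next_word_probabilities(counts, "the"))  # "cat" is most likely, at 1/3
print(generate(counts, "the"))
```

Run as-is, the greedy loop produces “the cat sat on the cat”: every step picks a locally likely word, yet the sentence makes no sense, because a model that sees only one previous word cannot keep track of meaning. Using much longer context is one of the things transformers make possible.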

1. Training on Massive Datasets

LLMs are trained on huge amounts of text data collected from books, websites, articles, and other sources. This training helps the model learn:

- Grammar and syntax

- Context and meaning

- Relationships between words

Generally, exposure to more high-quality text helps the model learn language patterns more reliably.

2. Tokenization: Breaking Down Text

Before a model can process text, whether during training or when answering a prompt, the text is broken into smaller units called tokens. Tokens can be:

- Words

- Subwords

- Characters

For example:

- “Artificial Intelligence is powerful” → [“Artificial”, “Intelligence”, “is”, “powerful”]

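Tokenization turns text into a sequence of units that can be mapped to numbers (token IDs), which is the form the model actually processes. The example above splits on whole words; most modern LLMs use subword tokenizers, so common words often become a single token while rarer words are split into several pieces.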
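The Python sketch below contrasts the word-level split from the example with a simple subword scheme. It uses greedy longest-match over a tiny, hand-made vocabulary (an assumption for illustration; production tokenizers learn vocabularies of tens of thousands of entries from data, commonly with byte-pair encoding or similar algorithms) and then maps each piece to an integer ID.

```python
# Hypothetical toy vocabulary, chosen by hand for this example.
VOCAB = ["artific", "ial", "intellig", "ence", "is", "power", "ful"]
TOKEN_TO_ID = {token: i for i, token in enumerate(VOCAB)}
UNKNOWN_ID = len(VOCAB)  # ID used for pieces missing from the vocabulary

def word_tokenize(text):
    """Word-level tokenization: split on whitespace."""
    return text.split()

def subword_tokenize(word):
    """Greedy longest-match: repeatedly take the longest vocabulary entry that
    matches the start of the remaining text, falling back to one character."""
    tokens = []
    start = 0
    while start < len(word):
        for end in range(len(word), start, -1):
            piece = word[start:end]
            if piece in TOKEN_TO_ID or end == start + 1:
                tokens.append(piece)
                start = end
                break
    return tokens

def encode(text):
    """Lowercase, split into words, split words into subwords, map to IDs."""
    pieces = []
    for word in word_tokenize(text.lower()):
        pieces.extend(subword_tokenize(word))
    ids = [TOKEN_TO_ID.get(p, UNKNOWN_ID) for p in pieces]
    return pieces, ids

sentence = "Artificial Intelligence is powerful"
print(word_tokenize(sentence))
print(encode(sentence))
```

With this vocabulary, “Artificial Intelligence is powerful” becomes the pieces `artific`, `ial`, `intellig`, `ence`, `is`, `power`, `ful`, with IDs 0 through 6. For simplicity the sketch lowercases the text and discards spaces, information that real tokenizers usually keep.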

3. Neural Networks and Deep Learning

LLMs are powered by neural networks, specifically a type called the transformer. Neural networks are loosely inspired by the brain's interconnected neurons, but they are mathematical models and do not replicate how the brain actually works.

Key components include:

- Layers of neurons

- Weighted connections

- Activation functions

During training, the model repeatedly adjusts these weights to reduce the errors in its predictions.
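Here is a minimal Python sketch of the components listed above: a layer of “neurons” that each compute a weighted sum of their inputs followed by an activation function (ReLU, chosen here for simplicity), and a one-weight example of gradient descent, a standard way of adjusting weights to reduce error. All numbers are hypothetical; real LLMs have billions of weights and compute gradients automatically via backpropagation.

```python
def relu(x):
    """Activation function: pass positive values through, zero out negatives."""
    return max(0.0, x)

def dense_layer(inputs, weights, biases):
    """One layer: each neuron takes a weighted sum of the inputs plus a bias,
    then applies the activation function."""
    return [
        relu(sum(w * x for w, x in zip(neuron_weights, inputs)) + b)
        for neuron_weights, b in zip(weights, biases)
    ]

# Hypothetical weights for a layer with 2 inputs and 3 neurons.
weights = [[0.5, -1.0], [1.0, 1.0], [-0.5, 0.25]]
biases = [0.0, 0.1, 0.0]
print(dense_layer([2.0, 1.0], weights, biases))

def train_single_weight(x, target, w=0.0, learning_rate=0.1, steps=50):
    """Fit prediction = w * x to a target by gradient descent on squared error."""
    for _ in range(steps):
        prediction = w * x
        gradient = 2 * (prediction - target) * x  # derivative of (w*x - target)**2
        w -= learning_rate * gradient  # step against the gradient
    return w

print(train_single_weight(x=2.0, target=6.0))  # converges toward w = 3
```

Training a real network follows the same pattern at a vastly larger scale: make a prediction, measure the error, and nudge every weight in the direction that reduces it.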

4. The Transformer Architecture

The transformer architecture is the backbone of modern LLMs. It is built around a mechanism called attention, which allows the model to focus on the most relevant parts of the input when processing each word.

For example:

In the sentence “The cat sat on the mat because it was tired,” attention helps the model link “it” to “the cat” rather than to “the mat.”

This ability to track context across long sentences makes transformers highly effective.

5. Self-Attention Mechanism

Self-attention helps the model evaluate the importance of each word in a sentence relative to others.

Benefits include:

- Better context understanding

- Improved accuracy

- Ability to relate words that are far apart in long text sequences

This is one of the key innovations that made LLMs so powerful.
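Self-attention is compact enough to sketch directly. The Python below implements scaled dot-product attention, the form used in the original transformer design: each word's query vector is compared with every word's key vector, the scores are normalized with softmax, and the resulting weights mix the value vectors. The vectors are hypothetical and hand-picked so that “it” resembles “cat”; in a trained model they come from learned parameters.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    largest = max(scores)  # subtracting the max keeps exp() numerically stable
    exps = [math.exp(s - largest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def self_attention(queries, keys, values):
    """Scaled dot-product attention.

    For each position: score its query against every key, divide by
    sqrt(dimension), softmax the scores into weights, and return the
    weighted average of the value vectors.
    """
    dim = len(keys[0])
    outputs, attention_weights = [], []
    for q in queries:
        scores = [dot(q, k) / math.sqrt(dim) for k in keys]
        weights = softmax(scores)
        mixed = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(mixed)
        attention_weights.append(weights)
    return outputs, attention_weights

# Hypothetical 2-D vectors, hand-picked so that "it" resembles "cat".
tokens = ["cat", "mat", "it"]
vectors = [[2.0, 0.0], [0.0, 2.0], [2.0, 0.5]]

# Simplification: real transformers derive queries, keys, and values from
# learned projections of each token's embedding; here all three are identical.
outputs, weights = self_attention(vectors, vectors, vectors)
for token, w in zip(tokens, weights[2]):
    print(f"'it' attends to {token!r}: {w:.2f}")
```

In this toy setup, “it” assigns far more weight to “cat” than to “mat” (it also attends strongly to itself) because its vector points in a similar direction. A trained model learns vectors that produce such links from data rather than having them set by hand.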

6. Training Process: Pretraining and Fine-Tuning

LLMs go through two major training phases:

Pretraining

- The model learns general language patterns

- Uses self-supervised learning, where the text itself provides the targets (no manual labeling)

- Processes billions of words

Fine-Tuning

- The model is refined for specific tasks

- Uses smaller, targeted datasets

- Improves performance for real-world applications
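The Python sketch below shows why pretraining needs no hand-labeled data: any piece of text can be turned into (context, next word) training pairs, because the answer is simply the word that actually comes next. It also computes cross-entropy, a standard loss for this kind of prediction, for two hypothetical model outputs. Supervised fine-tuning often uses the same kind of loss, just on a smaller, targeted dataset. Words stand in for tokens here for readability.

```python
import math

def pretraining_examples(tokens):
    """Self-supervised pairs: each prefix of the text is an input, and the
    word that follows it is the target. No human labeling is needed."""
    return [(tokens[:i], tokens[i]) for i in range(1, len(tokens))]

def cross_entropy(predicted_probs, target):
    """Loss for one prediction: small when the target got high probability,
    large when the model considered the target unlikely."""
    return -math.log(predicted_probs[target])

text = "the cat sat on the mat".split()
for context, target in pretraining_examples(text):
    print(" ".join(context), "->", target)

# Hypothetical model outputs for the context "the" (true next word: "cat").
confident = {"cat": 0.7, "mat": 0.2, "dog": 0.1}
unsure = {"cat": 0.1, "mat": 0.45, "dog": 0.45}
print(f"confident: {cross_entropy(confident, 'cat'):.3f}")
print(f"unsure:    {cross_entropy(unsure, 'cat'):.3f}")
```

The confident prediction gets a loss of about 0.36 and the unsure one about 2.30. Training adjusts the model's weights to push this loss down across billions of such examples.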

Next Gen AI and LLMs

Large Language Models are a core component of Next Gen AI, representing a shift from rule-based systems to intelligent, learning-driven architectures.

Unlike traditional rule-based AI, which relies on predefined logic, Next Gen AI systems use deep learning techniques to learn patterns from data and improve as they are trained further. LLMs are at the forefront of this transformation, enabling machines to process and generate human language with impressive fluency.

To explore this evolution in detail, check out our article on Next-Gen AI Advancements in the IT Industry, where we break down how AI is reshaping businesses and technology landscapes.

Additionally, if you want a broader understanding of how these technologies fit together, visit our Next Gen AI homepage for more insights and resources.

Why Are LLMs So Powerful?

LLMs have gained popularity due to their ability to generate human-like responses and adapt to multiple tasks.

Key Advantages:

- Scalability: Performance generally improves as models, training data, and compute grow

- Versatility: One model can handle multiple tasks

- Context Awareness: Uses surrounding context, not just keywords, to interpret meaning

- Automation: Reduces manual effort in content creation

Common Use Cases of LLMs

LLMs are widely used in content creation, including blog writing, copywriting, and social media posts. These capabilities are a direct result of innovations in Next Gen AI, which focuses on building smarter and more adaptive AI systems. You can learn more about this in our detailed guide on What is Next Gen AI.

LLMs are used across industries for various applications:

1. Content Creation

- Blog writing

- Copywriting

- Social media posts

2. Customer Support

- AI chatbots

- Automated responses

- Help desk systems

3. Language Translation

- Real-time translation

- Multilingual communication

4. Education

- Tutoring systems

- Study assistance

- Research summarization

5. Software Development

- Code generation

- Debugging support

- Documentation writing

Limitations of LLMs

Despite their capabilities, LLMs have certain limitations:

1. Lack of True Understanding

LLMs do not “think” like humans. They rely on patterns rather than genuine comprehension.

2. Bias in Training Data

If training data contains biases, the model may produce biased outputs.

3. Hallucinations

LLMs sometimes generate information that sounds plausible but is incorrect, misleading, or entirely made up, and they can present it confidently.

4. High Resource Requirements

Training LLMs requires significant computational power and energy.
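A quick back-of-the-envelope calculation in Python shows where these demands come from. The sketch assumes a hypothetical model with 7 billion parameters and counts only per-parameter storage. The 16-bytes-per-parameter training figure is a commonly cited estimate for mixed-precision training with the Adam optimizer (weights, gradients, and optimizer state); it excludes activations, so treat all results as rough lower bounds.

```python
def memory_gb(num_parameters, bytes_per_parameter):
    """Memory in gigabytes (10**9 bytes) needed to store per-parameter data."""
    return num_parameters * bytes_per_parameter / 1e9

params = 7e9  # hypothetical 7-billion-parameter model

print("weights, 32-bit floats:", memory_gb(params, 4), "GB")
print("weights, 16-bit floats:", memory_gb(params, 2), "GB")
# Rough estimate for mixed-precision Adam training: 16-bit weights and gradients
# (2 + 2 bytes) plus 32-bit master weights and two optimizer moments (4 + 4 + 4).
print("training state (approx.):", memory_gb(params, 16), "GB")
```

That works out to 28 GB or 14 GB just to store the weights, and roughly 112 GB of training state, far more than a typical consumer GPU holds. This is why large models are usually trained across many accelerators, often for weeks, consuming substantial energy.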

Future of Large Language Models

The future of LLMs looks promising as advancements continue in AI research.

Emerging trends include:

- More efficient models

- Improved accuracy

- Better alignment with human values

- Integration with real-world applications

As the technology evolves, LLMs are likely to become even more accessible and capable.

Conclusion

Understanding how LLMs work doesn’t require a technical background. At a basic level, they are powerful tools that learn language patterns from vast amounts of data and use that knowledge to generate meaningful text.

From tokenization and neural networks to transformers and attention mechanisms, each component plays a crucial role in making LLMs effective. While they have limitations, their potential continues to grow rapidly.

Whether you’re a student, developer, or business owner, learning about LLMs is an essential step toward understanding the future of artificial intelligence.